Integrate Langfuse with CrewAI

This notebook demonstrates how to integrate Langfuse with CrewAI using OpenTelemetry via the OpenLit SDK. By the end of this notebook, you will be able to trace your CrewAI applications with Langfuse for improved observability and debugging.

What is Langfuse?

Langfuse is an open-source LLM engineering platform. It provides tracing and monitoring capabilities for LLM applications, helping developers debug, analyze, and optimize their AI systems. Langfuse integrates with various tools and frameworks via native integrations, OpenTelemetry, and APIs/SDKs.

Get Started

We’ll walk through a simple example of using CrewAI and integrating it with Langfuse via OpenTelemetry using OpenLit.

Step 1: Install Dependencies
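A minimal sketch of the install step, assuming the PyPI package names `langfuse`, `openlit`, and `crewai` (the text above does not name them). In a notebook, a `%pip install` cell would do the same job. The helper names `pip_install_command` and `install_dependencies` are hypothetical.

```python
import subprocess
import sys

# Assumed PyPI package names; adjust to match your environment.
PACKAGES = ["langfuse", "openlit", "crewai"]


def pip_install_command(packages):
    """Build a pip command that targets the interpreter running this code."""
    return [sys.executable, "-m", "pip", "install", *packages]


def install_dependencies(packages=PACKAGES):
    """Install the packages; raises CalledProcessError if pip fails."""
    subprocess.check_call(pip_install_command(packages))
```

Calling `install_dependencies()` once is enough. Using `sys.executable` rather than a bare `pip` helps ensure the packages land in the same environment the notebook kernel is running in.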

Step 2: Set Up Environment Variables

Set your Langfuse API keys and configure OpenTelemetry export settings to send traces to Langfuse. Please refer to the Langfuse OpenTelemetry Docs for more information on the Langfuse OpenTelemetry endpoint (/api/public/otel) and authentication.
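The configuration described above can be sketched as follows. This assumes Langfuse Cloud in the EU region as the host and HTTP Basic authentication with the public key as the username and the secret key as the password. The key values are placeholders, and `basic_auth_token` is a hypothetical helper name.

```python
import base64
import os

# Placeholder credentials; replace with the keys from your Langfuse project settings.
LANGFUSE_PUBLIC_KEY = "pk-lf-..."
LANGFUSE_SECRET_KEY = "sk-lf-..."


def basic_auth_token(public_key, secret_key):
    """Base64-encode "public:secret" for an HTTP Basic Authorization header."""
    return base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()


# Assumed host: Langfuse Cloud (EU region). Self-hosted setups use their own base URL.
os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://cloud.langfuse.com/api/public/otel"

# Standard OpenTelemetry variable for extra headers sent with every export request.
os.environ["OTEL_EXPORTER_OTLP_HEADERS"] = (
    f"Authorization=Basic {basic_auth_token(LANGFUSE_PUBLIC_KEY, LANGFUSE_SECRET_KEY)}"
)
```

The crew also needs credentials for whichever LLM provider it calls (for example an `OPENAI_API_KEY` variable if you use OpenAI models); set those the same way.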

Step 3: Initialize OpenLit
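As a rough sketch, initialization can be as small as a single `openlit.init()` call. The wrapper function `init_tracing` is a hypothetical name, and the sketch assumes the OpenTelemetry endpoint and headers have already been placed in the environment.

```python
def init_tracing():
    """Start OpenLit's auto-instrumentation; call once, before creating any agents."""
    # Local import keeps the dependency optional until tracing is actually enabled.
    import openlit

    # With no arguments, OpenLit is expected to pick up the export target from
    # the standard OTEL_EXPORTER_OTLP_* environment variables.
    openlit.init()
```

Call `init_tracing()` after the environment variables are set and before the crew runs, so that the LLM calls made during the run are captured.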

Initialize the OpenLit OpenTelemetry instrumentation SDK to start capturing OpenTelemetry traces.

Step 4: Create a Simple CrewAI Application
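One possible shape for such an application is sketched below using CrewAI's `Agent`, `Task`, and `Crew` classes. The two roles, their prompts, the `{question}` placeholder, and the helper names `build_crew` and `answer` are all illustrative assumptions rather than the notebook's original example.

```python
def build_crew():
    """Assemble a two-agent crew: one agent answers, the other rewrites the answer."""
    # Local import keeps this sketch importable before crewai is installed.
    from crewai import Agent, Crew, Task

    # Hypothetical roles and prompts, chosen only for illustration.
    researcher = Agent(
        role="Researcher",
        goal="Answer the user's question accurately and concisely",
        backstory="You are a careful analyst who checks facts before answering.",
    )
    writer = Agent(
        role="Writer",
        goal="Explain answers in plain language",
        backstory="You turn technical notes into short, friendly explanations.",
    )

    # {question} is filled in from the inputs passed to kickoff().
    research = Task(
        description="Answer this question: {question}",
        expected_output="A short, factual answer.",
        agent=researcher,
    )
    explain = Task(
        description="Rewrite the researcher's answer for a general audience.",
        expected_output="Two or three plain-language sentences.",
        agent=writer,
    )

    # Tasks run in the order listed (sequential is CrewAI's default process).
    return Crew(agents=[researcher, writer], tasks=[research, explain])


def answer(question):
    """Run the crew once; the LLM calls it makes are what gets traced."""
    return build_crew().kickoff(inputs={"question": question})
```

Running `answer("What is 3 * 3?")` after OpenLit has been initialized should send a trace to Langfuse. It requires a valid API key for the LLM provider the agents use.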

We’ll create a simple CrewAI application where multiple agents collaborate to answer a user’s question.

Step 5: See Traces in Langfuse

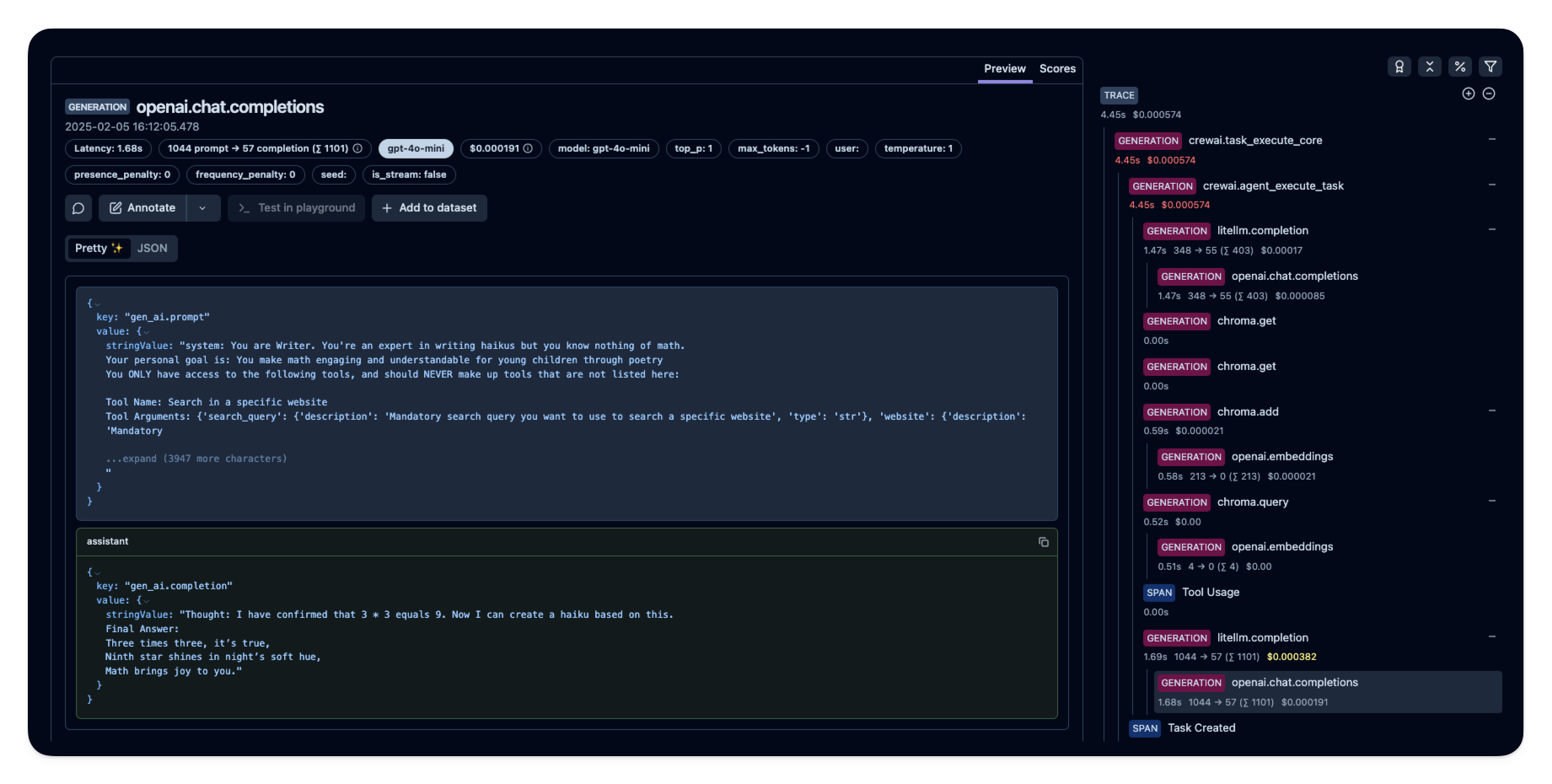

After running the agent, you can view the traces generated by your CrewAI application in Langfuse. You should see detailed steps of the LLM interactions, which can help you debug and optimize your AI agent.

Public example trace in Langfuse