Weave Overview

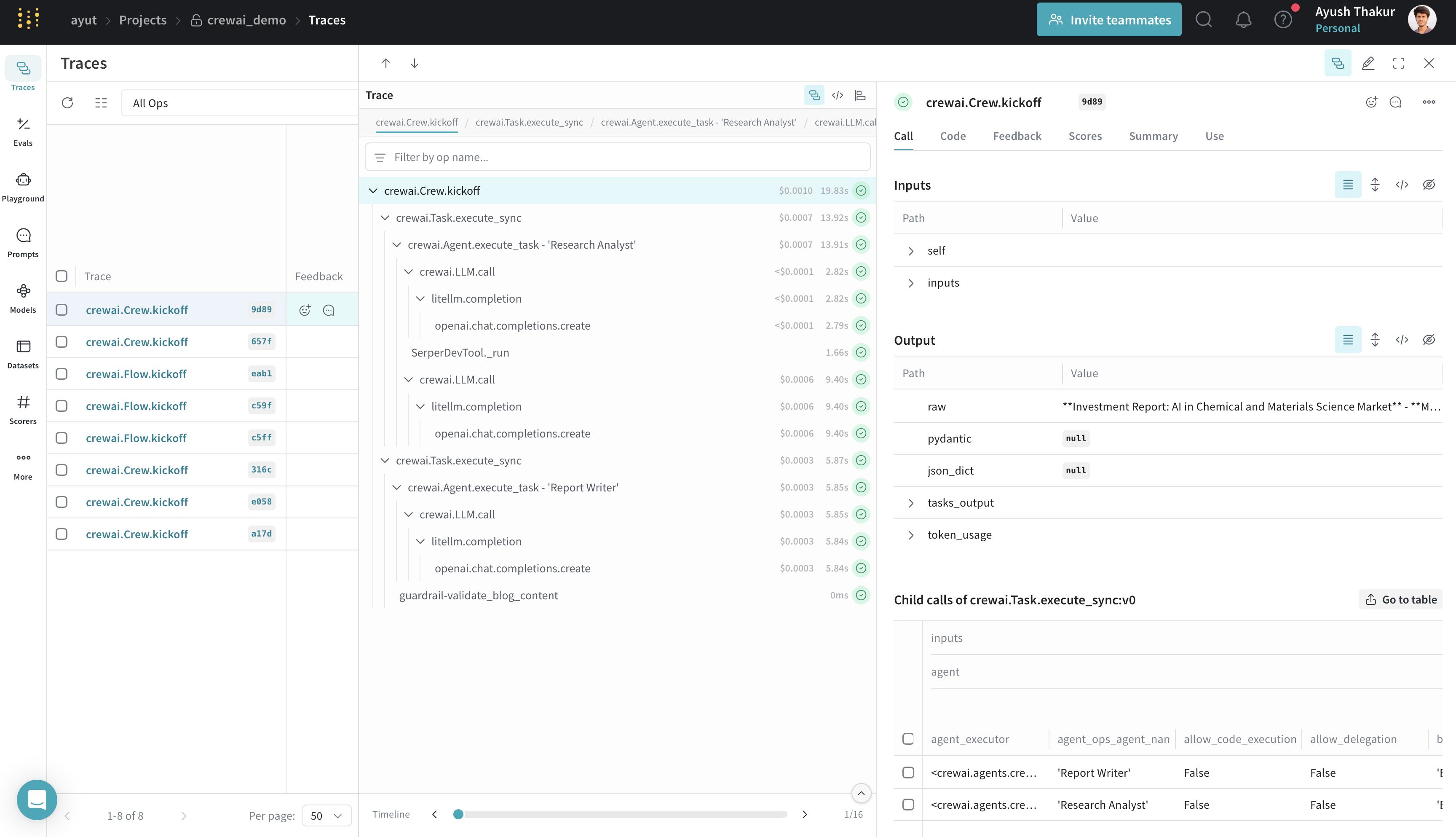

Weights & Biases (W&B) Weave is a framework for tracking, experimenting with, evaluating, deploying, and improving LLM-based applications.

- Tracing & Monitoring: Automatically track LLM calls and application logic to debug and analyze production systems

- Systematic Iteration: Refine and iterate on prompts, datasets, and models

- Evaluation: Use custom or pre-built scorers to systematically assess and enhance agent performance

- Guardrails: Protect your agents with pre- and post-safeguards for content moderation and prompt safety

Setup Instructions

Set up W&B Account

Sign up for a Weights & Biases account if you haven’t already. You’ll need this to view your traces and metrics.

Initialize Weave in Your Application

Add the Weave initialization call to your application. After initialization, Weave will provide a URL where you can view your traces and metrics.
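The initialization step can be sketched as follows. This is a minimal example, not the only way to wire it up: the helper name `init_weave_tracing` and the project name `crewai_demo` are placeholders chosen for illustration, and the `weave` package is assumed to be installed (`pip install weave`) with W&B credentials already configured.

```python
def init_weave_tracing(project_name: str = "crewai_demo"):
    """Start Weave tracing for the current process.

    `project_name` is a placeholder; use the W&B project that should
    receive your traces.
    """
    # Imported lazily so that merely importing this module does not
    # require network access or W&B credentials.
    import weave

    # weave.init() connects to W&B and logs the URL of the project
    # page where traces and metrics can be viewed.
    return weave.init(project_name=project_name)
```

Call `init_weave_tracing()` once at application startup so that later CrewAI activity is captured under that project.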

Features

- Weave automatically captures all CrewAI operations: agent interactions and task executions; LLM calls with metadata and token usage; tool usage and results.

- The integration supports all CrewAI execution methods: `kickoff()`, `kickoff_for_each()`, `kickoff_async()`, and `kickoff_for_each_async()`.

- Automatic tracing of all crewAI-tools.

- Flow feature support with decorator patching (`@start`, `@listen`, `@router`, `@or_`, `@and_`).

- Track custom guardrails passed to CrewAI `Task` with `@weave.op()`.
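To illustrate the guardrail item above, here is a hedged sketch of a custom guardrail wrapped with `weave.op()`. The function names, the `MAX_WORDS` limit, and the word-count rule are all hypothetical. The sketch assumes the guardrail receives a CrewAI task output exposing its text as `.raw` and returns a `(passed, value_or_error)` tuple.

```python
from typing import Any, Tuple

MAX_WORDS = 200  # hypothetical limit, chosen only for illustration


def validate_word_count(result: Any) -> Tuple[bool, Any]:
    """Guardrail: accept the task output only if it fits within MAX_WORDS.

    `result` is assumed to be a CrewAI task output; only `.raw` is read.
    """
    text = result.raw
    word_count = len(text.split())
    if word_count > MAX_WORDS:
        # A failing guardrail returns False plus an error message.
        return (False, f"Output has {word_count} words; limit is {MAX_WORDS}.")
    # A passing guardrail returns True plus the (cleaned) output.
    return (True, text.strip())


def traced_guardrail():
    """Wrap the guardrail with weave.op() so each call shows up as a trace."""
    import weave  # lazy import; assumes `pip install weave`

    return weave.op()(validate_word_count)
```

Under these assumptions, the wrapped function would be passed to a task as `Task(..., guardrail=traced_guardrail())` after Weave has been initialized. Decorating the function directly with `@weave.op()` is the equivalent form when `weave` is imported at module level.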