Overview of an Agent

In the CrewAI framework, an Agent is an autonomous unit that can:

- Perform specific tasks

- Make decisions based on its role and goal

- Use tools to accomplish objectives

- Communicate and collaborate with other agents

- Maintain memory of interactions

- Delegate tasks when allowed

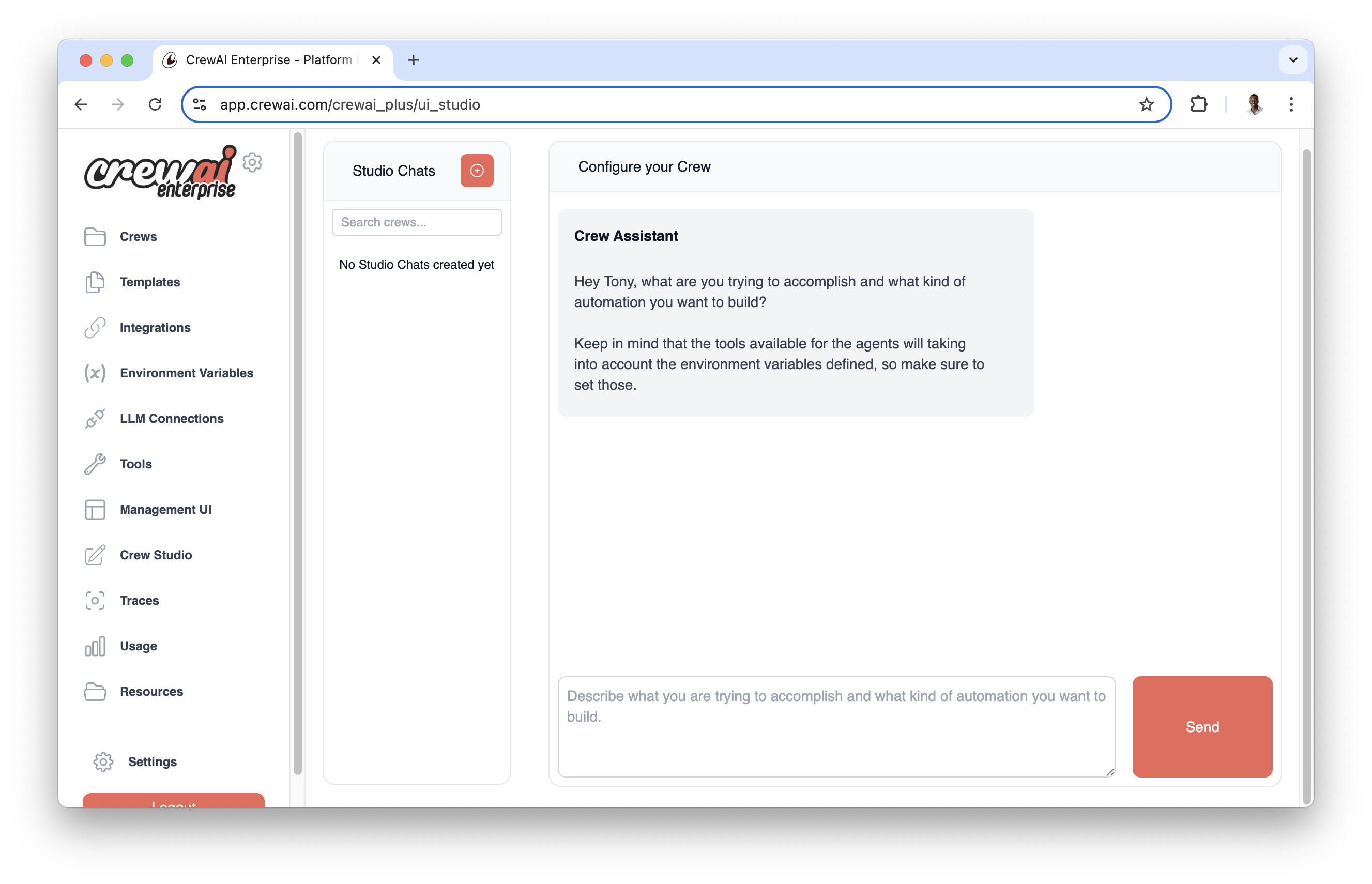

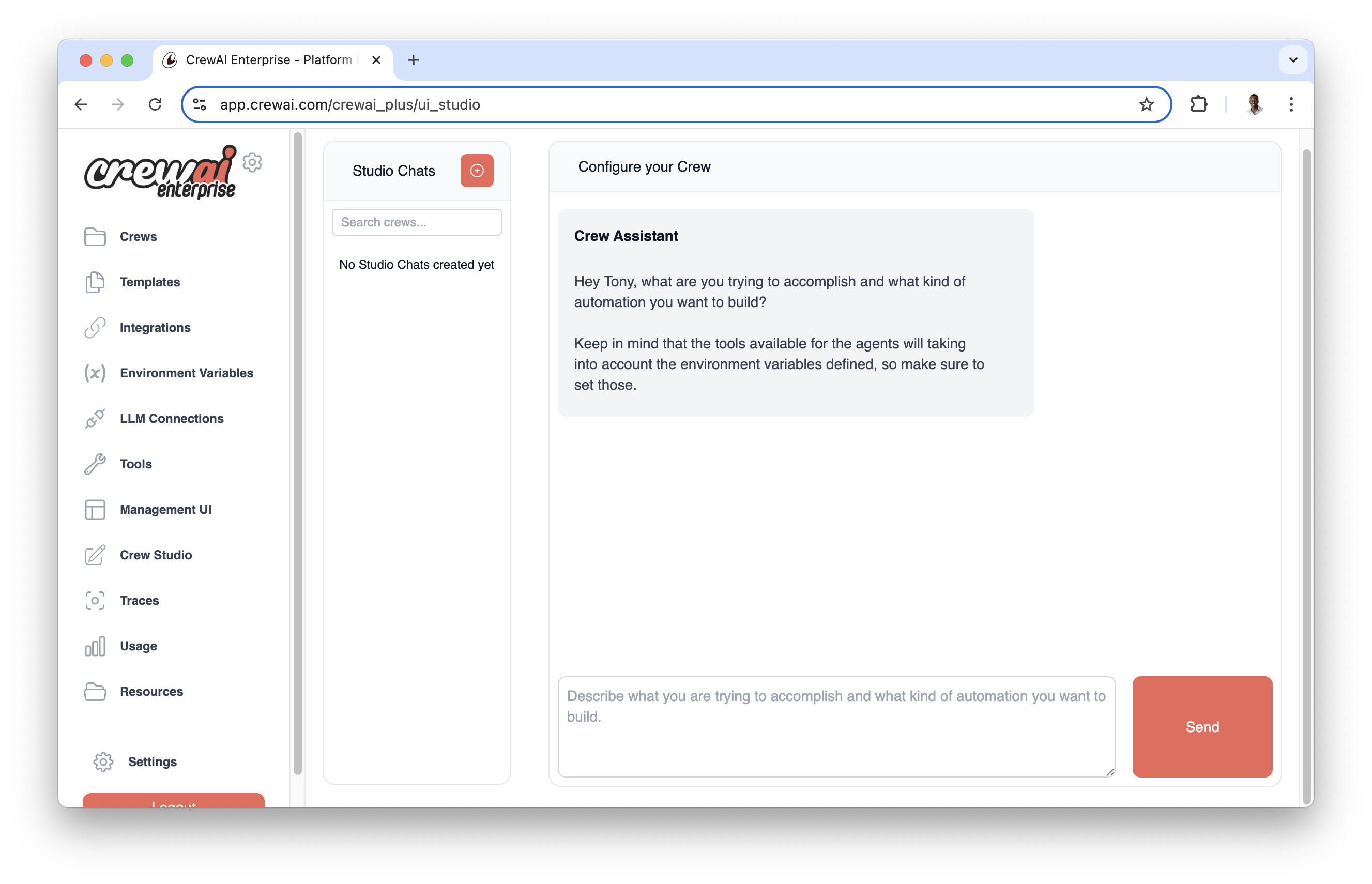

CrewAI AMP includes a Visual Agent Builder for creating and configuring agents without writing code. Design your agents visually and test them in real time.

- Intuitive agent configuration with form-based interfaces

- Real-time testing and validation

- Template library with pre-configured agent types

- Easy customization of agent attributes and behaviors

Agent Attributes

| Attribute | Parameter | Type | Description |
|---|---|---|---|
| Role | role | str | Defines the agent’s function and expertise within the crew. |
| Goal | goal | str | The individual objective that guides the agent’s decision-making. |
| Backstory | backstory | str | Provides context and personality to the agent, enriching interactions. |
| LLM (optional) | llm | Union[str, LLM, Any] | Language model that powers the agent. Defaults to the model specified in OPENAI_MODEL_NAME or “gpt-4”. |
| Tools (optional) | tools | List[BaseTool] | Capabilities or functions available to the agent. Defaults to an empty list. |
| Function Calling LLM (optional) | function_calling_llm | Optional[Any] | Language model for tool calling, overrides crew’s LLM if specified. |
| Max Iterations (optional) | max_iter | int | Maximum iterations before the agent must provide its best answer. Default is 20. |
| Max RPM (optional) | max_rpm | Optional[int] | Maximum requests per minute to avoid rate limits. |
| Max Execution Time (optional) | max_execution_time | Optional[int] | Maximum time (in seconds) for task execution. |
| Verbose (optional) | verbose | bool | Enable detailed execution logs for debugging. Default is False. |
| Allow Delegation (optional) | allow_delegation | bool | Allow the agent to delegate tasks to other agents. Default is False. |
| Step Callback (optional) | step_callback | Optional[Any] | Function called after each agent step, overrides crew callback. |
| Cache (optional) | cache | bool | Enable caching for tool usage. Default is True. |
| System Template (optional) | system_template | Optional[str] | Custom system prompt template for the agent. |
| Prompt Template (optional) | prompt_template | Optional[str] | Custom prompt template for the agent. |
| Response Template (optional) | response_template | Optional[str] | Custom response template for the agent. |
| Allow Code Execution (optional) | allow_code_execution | Optional[bool] | Enable code execution for the agent. Default is False. |
| Max Retry Limit (optional) | max_retry_limit | int | Maximum number of retries when an error occurs. Default is 2. |
| Respect Context Window (optional) | respect_context_window | bool | Keep messages under context window size by summarizing. Default is True. |
| Code Execution Mode (optional) | code_execution_mode | Literal["safe", "unsafe"] | Mode for code execution: ‘safe’ (using Docker) or ‘unsafe’ (direct). Default is ‘safe’. |
| Multimodal (optional) | multimodal | bool | Whether the agent supports multimodal capabilities. Default is False. |
| Inject Date (optional) | inject_date | bool | Whether to automatically inject the current date into tasks. Default is False. |
| Date Format (optional) | date_format | str | Format string for date when inject_date is enabled. Default is “%Y-%m-%d” (ISO format). |
| Reasoning (optional) | reasoning | bool | Whether the agent should reflect and create a plan before executing a task. Default is False. |
| Max Reasoning Attempts (optional) | max_reasoning_attempts | Optional[int] | Maximum number of reasoning attempts before executing the task. If None, will try until ready. |
| Embedder (optional) | embedder | Optional[Dict[str, Any]] | Configuration for the embedder used by the agent. |
| Knowledge Sources (optional) | knowledge_sources | Optional[List[BaseKnowledgeSource]] | Knowledge sources available to the agent. |
| Use System Prompt (optional) | use_system_prompt | Optional[bool] | Whether to use system prompt (for o1 model support). Default is True. |

Creating Agents

There are two ways to create agents in CrewAI: using YAML configuration (recommended) or defining them directly in code.

YAML Configuration (Recommended)

Using YAML configuration provides a cleaner, more maintainable way to define agents. We strongly recommend using this approach in your CrewAI projects. After creating your CrewAI project as outlined in the Installation section, navigate to the src/latest_ai_development/config/agents.yaml file and modify the template to match your requirements.

Variables in your YAML files (like {topic}) will be replaced with values from your inputs when running the crew:

Code

agents.yaml
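The placeholder substitution described above behaves much like ordinary Python string formatting. The sketch below is an illustration only, not CrewAI's own code: `agent_config`, its example strings, and the `interpolate` helper are hypothetical names, and the dictionary stands in for one parsed entry of agents.yaml.

```python
# Illustration only: mimics how a {topic} placeholder in agents.yaml could be
# filled from crew inputs. This is plain str.format, not CrewAI's own code.

# Hypothetical agent entry, as it might look after the YAML file is parsed.
agent_config = {
    "role": "{topic} Senior Data Researcher",
    "goal": "Uncover cutting-edge developments in {topic}",
    "backstory": "You're a seasoned researcher with a knack for {topic}.",
}


def interpolate(config: dict[str, str], inputs: dict[str, str]) -> dict[str, str]:
    """Return a copy of config with every {name} placeholder filled from inputs."""
    return {key: value.format(**inputs) for key, value in config.items()}


# The inputs dictionary plays the part of the values passed when running the crew.
resolved = interpolate(agent_config, {"topic": "AI Agents"})
print(resolved["role"])
print(resolved["goal"])
```

A placeholder with no matching input raises `KeyError` in this sketch, which is one reason to keep the input names and the YAML placeholders in sync.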

To use this YAML configuration in your code, create a crew class decorated with CrewBase:

Code

The names you use in your YAML files (agents.yaml) should match the method names in your Python code.

Direct Code Definition

You can create agents directly in code by instantiating the Agent class. Here’s a comprehensive example showing all available parameters:

Code

Basic Research Agent

Code

Code Development Agent

Code

Long-Running Analysis Agent

Code

Custom Template Agent

Code

Date-Aware Agent with Reasoning

Code

Reasoning Agent

Code

Multimodal Agent

Code

Parameter Details

Critical Parameters

- role, goal, and backstory are required and shape the agent’s behavior

- llm determines the language model used (default: OpenAI’s GPT-4)

Memory and Context

- memory: Enable to maintain conversation history

- respect_context_window: Prevents token limit issues

- knowledge_sources: Add domain-specific knowledge bases

Execution Control

- max_iter: Maximum attempts before giving best answer

- max_execution_time: Timeout in seconds

- max_rpm: Rate limiting for API calls

- max_retry_limit: Retries on error
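To make the idea behind max_rpm concrete, here is a minimal sliding-window limiter. It is a sketch of the general technique, not CrewAI's implementation: the class name `RequestsPerMinuteLimiter`, its methods, and the injectable `clock` are all hypothetical.

```python
import time
from collections import deque
from typing import Callable


class RequestsPerMinuteLimiter:
    """Hypothetical sliding-window limiter; not CrewAI's implementation."""

    def __init__(self, max_rpm: int, clock: Callable[[], float] = time.monotonic):
        self.max_rpm = max_rpm
        self.clock = clock
        self.sent: deque[float] = deque()  # timestamps of requests in the last 60 s

    def seconds_until_allowed(self) -> float:
        """Return 0.0 if a request may go out now, else the wait in seconds."""
        now = self.clock()
        # Forget requests that are at least 60 seconds old.
        while self.sent and now - self.sent[0] >= 60:
            self.sent.popleft()
        if len(self.sent) < self.max_rpm:
            return 0.0
        # The oldest request in the window decides when a slot frees up.
        return 60 - (now - self.sent[0])

    def record_request(self) -> None:
        """Register that a request was sent at the current time."""
        self.sent.append(self.clock())


# Usage with a fake clock so the behaviour is reproducible.
fake_now = [0.0]
limiter = RequestsPerMinuteLimiter(max_rpm=2, clock=lambda: fake_now[0])
limiter.record_request()
limiter.record_request()
wait = limiter.seconds_until_allowed()        # both slots were used at t=0
fake_now[0] = 61.0
wait_later = limiter.seconds_until_allowed()  # the window has moved on
```

A caller would sleep for the returned number of seconds before sending the next request; a lower limit trades throughput for fewer rate-limit errors.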

Code Execution

- allow_code_execution (deprecated): Previously enabled built-in code execution via CodeInterpreterTool.

- code_execution_mode (deprecated): Previously controlled execution mode ("safe" for Docker, "unsafe" for direct execution).

Advanced Features

- multimodal: Enable multimodal capabilities for processing text and visual content

- reasoning: Enable agent to reflect and create plans before executing tasks

- inject_date: Automatically inject current date into task descriptions

Templates

- system_template: Defines agent’s core behavior

- prompt_template: Structures input format

- response_template: Formats agent responses

When using custom templates, ensure that both system_template and prompt_template are defined. The response_template is optional but recommended for consistent output formatting.

When using custom templates, you can use variables like {role}, {goal}, and {backstory} in your templates. These will be automatically populated during execution.

Agent Tools

Agents can be equipped with various tools to enhance their capabilities. CrewAI supports tools from:

Here’s how to add tools to an agent:

Code

Agent Memory and Context

Agents can maintain memory of their interactions and use context from previous tasks. This is particularly useful for complex workflows where information needs to be retained across multiple tasks.

Code

When memory is enabled, the agent will maintain context across multiple interactions, improving its ability to handle complex, multi-step tasks.

Context Window Management

CrewAI includes automatic context window management to handle conversations that exceed the language model’s token limits. This feature is controlled by the respect_context_window parameter.

How Context Window Management Works

When an agent’s conversation history grows too large for the LLM’s context window, CrewAI automatically detects this situation and can either:

- Automatically summarize content (when respect_context_window=True)

- Stop execution with an error (when respect_context_window=False)
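The two behaviors listed above can be pictured with a small standard-library sketch. It is not CrewAI's implementation: `fit_history`, `ContextLengthExceeded`, the whitespace-based `count_tokens`, and the `first_words_summary` placeholder are hypothetical names chosen for illustration, and a real system would use the model's tokenizer and an LLM call to summarize.

```python
from typing import Callable


class ContextLengthExceeded(RuntimeError):
    """Raised when the history does not fit and summarization is disabled."""


def count_tokens(text: str) -> int:
    # Crude stand-in: real tokenizers count differently from whitespace splitting.
    return len(text.split())


def fit_history(
    history: list[str],
    limit: int,
    respect_context_window: bool,
    summarize: Callable[[list[str]], str],
) -> list[str]:
    """Return a history that fits the limit, or raise if that is not allowed."""
    total = sum(count_tokens(message) for message in history)
    if total <= limit:
        return history
    if not respect_context_window:
        raise ContextLengthExceeded("Context length exceeded.")
    # Replace the whole history with a single summary message.
    return [summarize(history)]


def first_words_summary(history: list[str]) -> str:
    # Placeholder for an LLM summarization call: keeps the first word of each message.
    return " ".join(message.split()[0] for message in history)


history = ["alpha beta gamma", "delta epsilon", "zeta eta theta iota"]  # 9 "tokens"
summarized = fit_history(history, limit=5, respect_context_window=True,
                         summarize=first_words_summary)

try:
    fit_history(history, limit=5, respect_context_window=False,
                summarize=first_words_summary)
    strict_mode_raised = False
except ContextLengthExceeded:
    strict_mode_raised = True
```

The trade-off is visible in the two outcomes: the summarizing path keeps running with less detail, while the strict path keeps every detail but stops.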

Automatic Context Handling (respect_context_window=True)

This is the default and recommended setting for most use cases. When enabled, CrewAI will:

Code

- ⚠️ Warning message: "Context length exceeded. Summarizing content to fit the model context window."

- 🔄 Automatic summarization: CrewAI intelligently summarizes the conversation history

- ✅ Continued execution: Task execution continues seamlessly with the summarized context

- 📝 Preserved information: Key information is retained while reducing token count

Strict Context Limits (respect_context_window=False)

When you need precise control and prefer execution to stop rather than lose any information:

Code

- ❌ Error message: "Context length exceeded. Consider using smaller text or RAG tools from crewai_tools."

- 🛑 Execution stops: Task execution halts immediately

- 🔧 Manual intervention required: You need to modify your approach

Choosing the Right Setting

Use respect_context_window=True (Default) when:

- Processing large documents that might exceed context limits

- Long-running conversations where some summarization is acceptable

- Research tasks where general context is more important than exact details

- Prototyping and development where you want robust execution

Code

Use respect_context_window=False when:

- Precision is critical and information loss is unacceptable

- Legal or medical tasks requiring complete context

- Code review where missing details could introduce bugs

- Financial analysis where accuracy is paramount

Code

Alternative Approaches for Large Data

When dealing with very large datasets, consider these strategies:

1. Use RAG Tools

Code

2. Use Knowledge Sources

Code

Context Window Best Practices

- Monitor Context Usage: Enable verbose=True to see context management in action

- Design for Efficiency: Structure tasks to minimize context accumulation

- Use Appropriate Models: Choose LLMs with context windows suitable for your tasks

- Test Both Settings: Try both True and False to see which works better for your use case

- Combine with RAG: Use RAG tools for very large datasets instead of relying solely on context windows

Troubleshooting Context Issues

If you’re getting context limit errors:

Code

Code

The context window management feature works automatically in the background. You don’t need to call any special functions - just set respect_context_window to your preferred behavior and CrewAI handles the rest!

Direct Agent Interaction with kickoff()

Agents can be used directly without going through a task or crew workflow using the kickoff() method. This provides a simpler way to interact with an agent when you don’t need the full crew orchestration capabilities.

How kickoff() Works

The kickoff() method allows you to send messages directly to an agent and get a response, similar to how you would interact with an LLM but with all the agent’s capabilities (tools, reasoning, etc.).

Code

Parameters and Return Values

| Parameter | Type | Description |
|---|---|---|
| messages | Union[str, List[Dict[str, str]]] | Either a string query or a list of message dictionaries with role/content |
| response_format | Optional[Type[Any]] | Optional Pydantic model for structured output |

The method returns a LiteAgentOutput object with the following properties:

- raw: String containing the raw output text

- pydantic: Parsed Pydantic model (if a response_format was provided)

- agent_role: Role of the agent that produced the output

- usage_metrics: Token usage metrics for the execution

Structured Output

You can get structured output by providing a Pydantic model as the response_format:

Code

Multiple Messages

You can also provide a conversation history as a list of message dictionaries:

Code
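The two accepted shapes of the messages parameter, a plain string or a list of role/content dictionaries, can be pictured with the sketch below. `normalize_messages` is a hypothetical helper, not part of CrewAI, and treating a bare string as a single user message is an assumption made for illustration.

```python
from typing import Union

Message = dict[str, str]


def normalize_messages(messages: Union[str, list[Message]]) -> list[Message]:
    """Return a list of role/content dictionaries for either accepted input shape."""
    if isinstance(messages, str):
        # Assumption: a bare string is treated as a single user message.
        return [{"role": "user", "content": messages}]
    for message in messages:
        # Every dictionary needs both keys to be a usable chat message.
        if not {"role", "content"} <= message.keys():
            raise ValueError(f"message needs 'role' and 'content': {message!r}")
    return list(messages)


# A single query given as a string.
single = normalize_messages("What is the capital of France?")

# A conversation history given as a list of message dictionaries.
conversation = normalize_messages([
    {"role": "user", "content": "I need information about large language models"},
    {"role": "assistant", "content": "Which aspect interests you most?"},
    {"role": "user", "content": "Recent developments"},
])
```

Passing the history explicitly lets earlier turns shape the reply, which a single string cannot do.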

Async Support

An asynchronous version is available via kickoff_async() with the same parameters:

Code
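The calling pattern for an asynchronous kickoff can be sketched without CrewAI installed. `StandInAgent` below is a hypothetical stand-in, not the real Agent class: it only mimics a kickoff_async(messages) coroutine and returns a plain string, whereas the real method returns a LiteAgentOutput. The point of the sketch is the `await` and `asyncio.gather` usage.

```python
import asyncio


class StandInAgent:
    """Hypothetical stand-in so the sketch runs without CrewAI or an API key."""

    def __init__(self, role: str):
        self.role = role

    async def kickoff_async(self, messages: str) -> str:
        await asyncio.sleep(0)  # yield control, as a real network call would
        return f"[{self.role}] answered: {messages}"


async def main() -> list[str]:
    researcher = StandInAgent(role="Researcher")
    writer = StandInAgent(role="Writer")
    # Both calls are awaited together rather than one after the other.
    return await asyncio.gather(
        researcher.kickoff_async("Summarize recent agent frameworks"),
        writer.kickoff_async("Draft an outline"),
    )


results = asyncio.run(main())
```

`asyncio.gather` returns the results in the order the coroutines were passed, so each answer can be matched back to its agent.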

The kickoff() method uses a LiteAgent internally, which provides a simpler execution flow while preserving all of the agent’s configuration (role, goal, backstory, tools, etc.).

Important Considerations and Best Practices

Security and Code Execution

Performance Optimization

- Use respect_context_window: true to prevent token limit issues

- Set appropriate max_rpm to avoid rate limiting

- Enable cache: true to improve performance for repetitive tasks

- Adjust max_iter and max_retry_limit based on task complexity
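Why caching helps with repetitive tasks can be seen in a small sketch of tool-result caching. This shows the general idea only, not CrewAI's cache: `ToolCache`, its `run` method, and the `slow_lookup` tool are hypothetical names.

```python
import json
from typing import Any, Callable


class ToolCache:
    """Hypothetical cache keyed by tool name and arguments; not CrewAI's cache."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], Any] = {}
        self.hits = 0

    def run(self, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        # Serialize the arguments with sorted keys so argument order does not matter.
        key = (tool_name, json.dumps(kwargs, sort_keys=True))
        if key in self._store:
            self.hits += 1
            return self._store[key]
        result = tool(**kwargs)
        self._store[key] = result
        return result


calls: list[str] = []


def slow_lookup(city: str) -> str:
    # Stand-in for an expensive tool call; records each real invocation.
    calls.append(city)
    return f"weather for {city}"


cache = ToolCache()
first = cache.run("weather", slow_lookup, city="Paris")
second = cache.run("weather", slow_lookup, city="Paris")  # served from the cache
```

The second call never reaches the tool, so caching suits tools whose results do not change between identical calls.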

Memory and Context Management

- Leverage knowledge_sources for domain-specific information

- Configure embedder when using custom embedding models

- Use custom templates (system_template, prompt_template, response_template) for fine-grained control over agent behavior

Advanced Features

- Enable reasoning: true for agents that need to plan and reflect before executing complex tasks

- Set appropriate max_reasoning_attempts to control planning iterations (None for unlimited attempts)

- Use inject_date: true to provide agents with current date awareness for time-sensitive tasks

- Customize the date format with date_format using standard Python datetime format codes

- Enable multimodal: true for agents that need to process both text and visual content

Agent Collaboration

- Enable allow_delegation: true when agents need to work together

- Use step_callback to monitor and log agent interactions

- Consider using different LLMs for different purposes:

  - Main llm for complex reasoning

  - function_calling_llm for efficient tool usage

Date Awareness and Reasoning

- Use inject_date: true to provide agents with current date awareness for time-sensitive tasks

- Customize the date format with date_format using standard Python datetime format codes

- Valid format codes include: %Y (year), %m (month), %d (day), %B (full month name), etc.

- Invalid date formats will be logged as warnings and will not modify the task description

- Enable reasoning: true for complex tasks that benefit from upfront planning and reflection
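The effect of date_format can be previewed with Python's own datetime module, since the format codes are the standard strftime ones. The helper `with_current_date` and the "Current Date:" wording are assumptions made for illustration. The exact text CrewAI adds to a task description may differ.

```python
from datetime import date


def with_current_date(description: str, today: date, date_format: str = "%Y-%m-%d") -> str:
    """Append the formatted date to a task description (wording is an assumption)."""
    return f"{description}\n\nCurrent Date: {today.strftime(date_format)}"


fixed_day = date(2025, 1, 15)  # fixed so the result is reproducible

# Default ISO-style format, matching the documented default "%Y-%m-%d".
iso_text = with_current_date("Summarize this week's AI news.", fixed_day)

# A day-first format built from the same standard codes.
day_first_text = with_current_date("Summarize this week's AI news.", fixed_day, "%d/%m/%Y")

print(iso_text)
print(day_first_text)
```

Trying a format string this way before putting it in an agent configuration is a quick check that it produces the intended layout.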

Model Compatibility

- Set use_system_prompt: false for older models that don’t support system messages

- Ensure your chosen llm supports the features you need (like function calling)

Troubleshooting Common Issues

- Rate Limiting: If you’re hitting API rate limits:

  - Implement appropriate max_rpm

  - Use caching for repetitive operations

  - Consider batching requests

- Context Window Errors: If you’re exceeding context limits:

  - Enable respect_context_window

  - Use more efficient prompts

  - Clear agent memory periodically

- Code Execution Issues: If code execution fails:

  - Verify Docker is installed for safe mode

  - Check execution permissions

  - Review code sandbox settings

- Memory Issues: If agent responses seem inconsistent:

  - Check knowledge source configuration

  - Review conversation history management