Prerequisites

CrewAI AMP account

Your organization must have an active CrewAI AMP account.

OpenTelemetry collector

You need an OpenTelemetry-compatible collector endpoint (e.g., your own OTel Collector, Datadog, Grafana, or any OTLP-compatible backend).

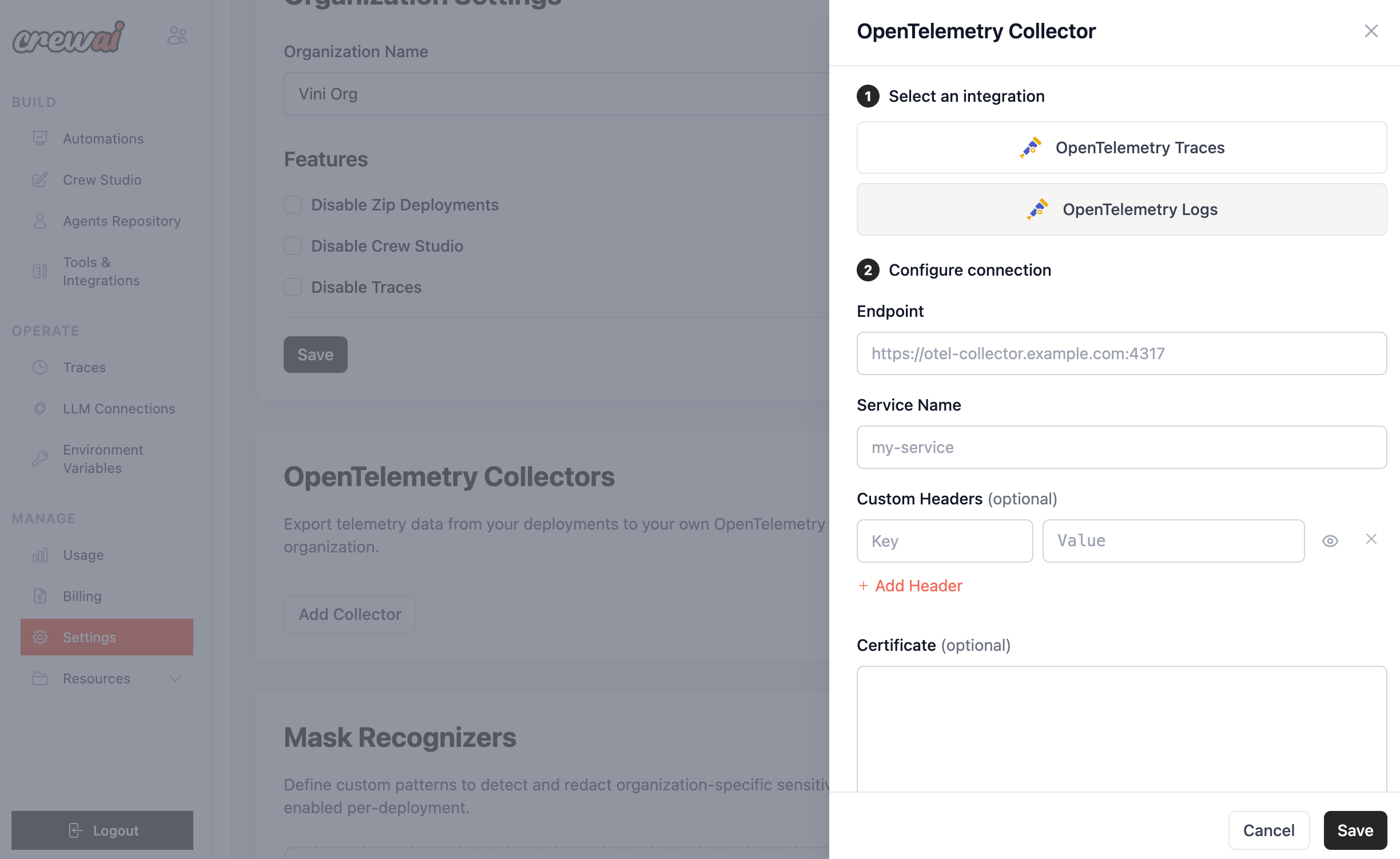
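Before registering a collector, it can help to confirm that its endpoint accepts connections from your network. The following is a minimal sketch using only the Python standard library. The helper names `parse_endpoint` and `is_reachable` are illustrative and not part of CrewAI or OpenTelemetry, and the fallback to port 4317 (the conventional OTLP/gRPC port) is an assumption for URLs that omit a port. A successful TCP connection only shows that something is listening; it does not prove the service speaks OTLP or that your credentials are valid.

```python
import socket
from urllib.parse import urlsplit

# Assumption: fall back to the conventional OTLP/gRPC port when the URL has none.
DEFAULT_OTLP_GRPC_PORT = 4317


def parse_endpoint(url: str) -> tuple[str, int, bool]:
    """Split an endpoint URL into (host, port, uses_tls)."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError(f"expected an http(s) URL with a host, got {url!r}")
    port = parts.port or DEFAULT_OTLP_GRPC_PORT
    return parts.hostname, port, parts.scheme == "https"


def is_reachable(url: str, timeout: float = 3.0) -> bool:
    """Return True if a plain TCP connection to the endpoint can be opened."""
    host, port, _ = parse_endpoint(url)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

For example, `is_reachable("https://otel-collector.example.com:4317")` returns `True` only if a TCP connection to that host and port succeeds within the timeout.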

Setting up a collector

- In CrewAI AMP, go to Settings > OpenTelemetry Collectors.

- Click Add Collector.

- Select an integration type — OpenTelemetry Traces or OpenTelemetry Logs.

- Configure the connection:

  - Endpoint: Your collector’s OTLP endpoint (e.g., https://otel-collector.example.com:4317).

  - Service Name: A name to identify this service in your observability platform.

  - Custom Headers (optional): Add authentication or routing headers as key-value pairs.

  - Certificate (optional): Provide a TLS certificate if your collector requires one.

- Click Save.
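The values entered in the steps above can be sanity-checked before you paste them into the form. The sketch below models the form fields as a plain Python dataclass. The class name `CollectorSettings`, its field names, and the validation rules are hypothetical and do not correspond to any CrewAI API; in particular, requiring an http(s) URL and a PEM-formatted certificate are assumptions based on the example endpoint above.

```python
from dataclasses import dataclass, field
from typing import Optional
from urllib.parse import urlsplit

INTEGRATION_TYPES = ("OpenTelemetry Traces", "OpenTelemetry Logs")


@dataclass
class CollectorSettings:
    # Hypothetical field names mirroring the form labels; not a CrewAI API.
    integration_type: str
    endpoint: str
    service_name: str
    custom_headers: dict[str, str] = field(default_factory=dict)
    certificate_pem: Optional[str] = None

    def problems(self) -> list[str]:
        """Return human-readable issues; an empty list means no issue was found."""
        issues = []
        if self.integration_type not in INTEGRATION_TYPES:
            issues.append(f"integration type must be one of {INTEGRATION_TYPES}")
        parts = urlsplit(self.endpoint)
        # Assumption: the endpoint is an http(s) URL, as in the example above.
        if parts.scheme not in ("http", "https") or not parts.hostname:
            issues.append("endpoint must be an http(s) URL with a host")
        if not self.service_name.strip():
            issues.append("service name must not be empty")
        for key in self.custom_headers:
            if not key or any(ch.isspace() for ch in key):
                issues.append(f"header name {key!r} is empty or contains whitespace")
        # Assumption: the certificate is supplied in PEM format.
        if self.certificate_pem is not None and "-----BEGIN CERTIFICATE-----" not in self.certificate_pem:
            issues.append("certificate does not look like PEM")
        return issues


settings = CollectorSettings(
    integration_type="OpenTelemetry Traces",
    endpoint="https://otel-collector.example.com:4317",
    service_name="my-crew",  # placeholder service name
    custom_headers={"Authorization": "Bearer <token>"},  # placeholder header
)
```

Calling `settings.problems()` on the example values returns an empty list. Collecting every issue in one pass, instead of raising on the first, lets you fix all fields before saving.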